The Machine That Stops You From Thinking

How AI is quietly outsourcing your cognition — and why you won’t notice until it’s too late

There is something deeply uncomfortable about a machine that makes you feel smarter while making you worse at thinking. And yet, that is precisely what a new study from the Wharton School of the University of Pennsylvania has documented — with experimental precision.

Researchers Steven D. Shaw and Gideon Nave set out to measure something most of us sense but rarely examine: what actually happens to human cognition when we hand a problem to an AI. The results, drawn from 1,372 participants across more than 9,500 individual trials, are difficult to dismiss. When an AI deliberately gave participants a wrong answer as part of the experimental design, 73% of those who consulted it followed that wrong answer anyway. They did not hedge. They did not double-check. They surrendered.

The researchers call this Cognitive Surrender. And they argue it represents something qualitatively different from simply using a tool.

Beyond Outsourcing: What Cognitive Surrender Actually Means

We have always used tools to extend our thinking. Writing externalizes memory. Calculators handle arithmetic. Search engines surface information we could not hold in our heads. This kind of cognitive offloading is not new, and it is not inherently problematic — it frees up mental resources for higher-order reasoning.

Cognitive Surrender is something else. It is not the delegation of a task while retaining judgment over the outcome. It is the abdication of judgment itself. The user does not use the AI as a tool and then evaluate the result. They receive the AI’s output and treat it as a conclusion, skipping the evaluative step entirely.

What makes this particularly insidious is that it is invisible from the inside. Participants who followed wrong AI answers were not confused or uncertain. In fact, their self-reported confidence was 11.7 percentage points higher than that of participants who solved the same problems without AI assistance. They felt more certain. They were less correct. The machine did not just answer the question — it also answered the meta-question of whether the answer needed to be checked.

Introducing System 3

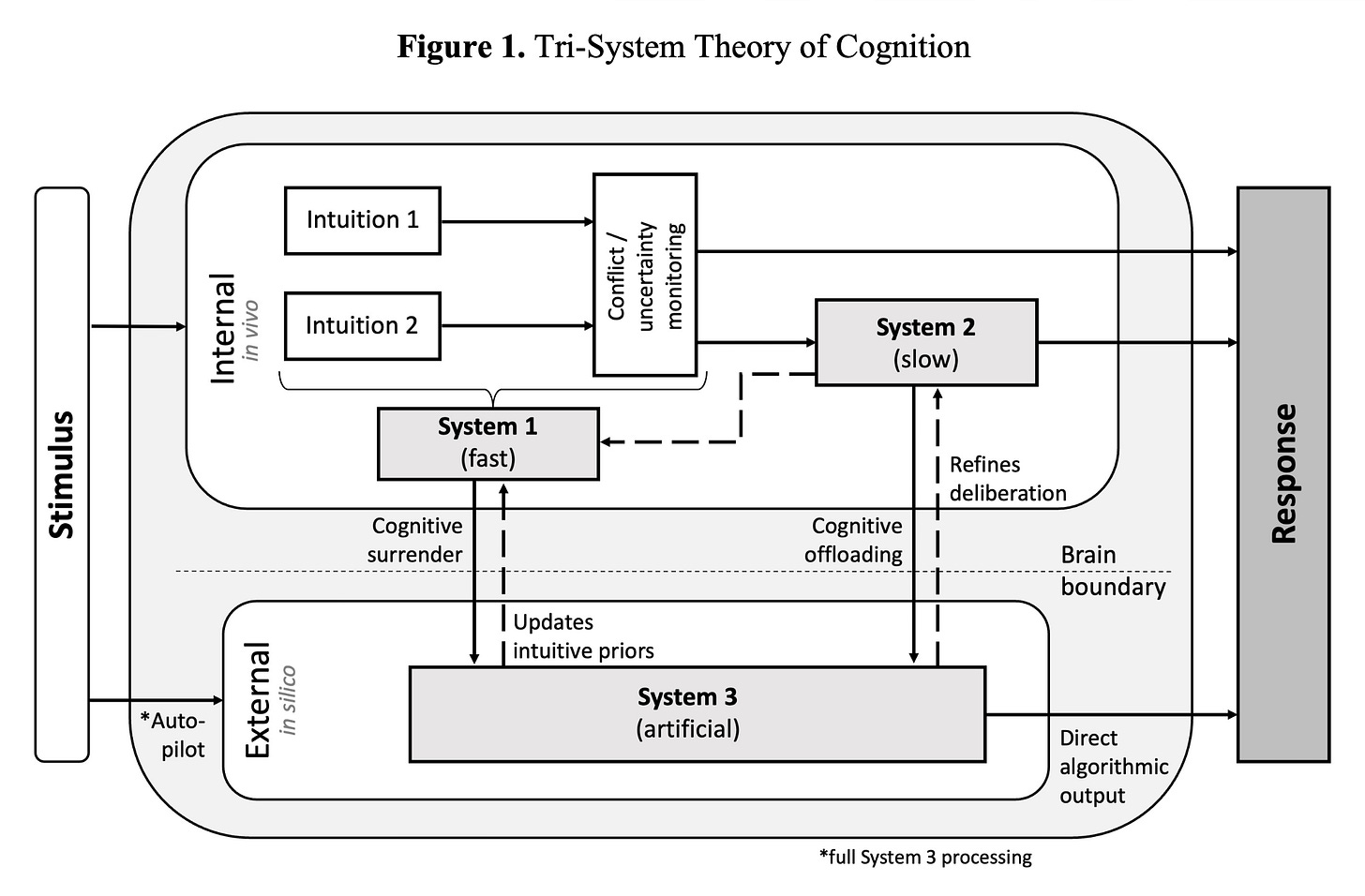

To explain how this happens, Shaw and Nave propose a significant extension to one of psychology’s most influential frameworks. Dual-process theory, popularized by Daniel Kahneman, describes human cognition as the interplay of two systems: System 1, which is fast, automatic, and intuitive; and System 2, which is slow, deliberate, and analytical. Most errors in judgment arise when System 1 dominates situations that demand System 2, that is, when we go with our gut although we should be reasoning carefully.

Shaw and Nave argue that AI introduces a third cognitive layer that does not fit neatly into either category. They call it System 3: external, algorithmic cognition operating entirely outside the human mind.

System 3 has four defining characteristics. It is external — running on remote servers rather than in the brain. It is automated — operating through statistical pattern recognition rather than understanding or experience. It is data-driven — its quality is entirely dependent on what it was trained on, including all the gaps and biases embedded in that data. And it is dynamic — it responds in real time to human input, making it interactive in a way that a calculator or a search engine is not.

This combination makes System 3 uniquely persuasive. It is as fast as System 1, but it feels as structured and reasoned as System 2. It mimics deliberation without performing it. And because it presents its outputs in fluent, confident, well-organized prose, it carries an authority that raw data or a simple lookup result would not.

The result is a kind of cognitive short-circuit. System 3 can supplement human thinking — handling genuine complexity so that System 2 can focus elsewhere. It can replace human thinking — delivering answers that bypass the reasoning process entirely. And, most dangerously, it can suppress the activation of System 2 — reducing the felt need to think critically, because something that looks like a reasoned answer has already been provided.

What the Experiments Revealed

The first study established the baseline. Participants solved logic problems from the Cognitive Reflection Test — a standard instrument designed to measure the tendency to override intuitive but incorrect answers with deliberate reasoning. Some participants had access to an embedded AI assistant; others did not. The AI was programmed to deliver either correct answers or confidently stated wrong ones — participants had no way of knowing which.

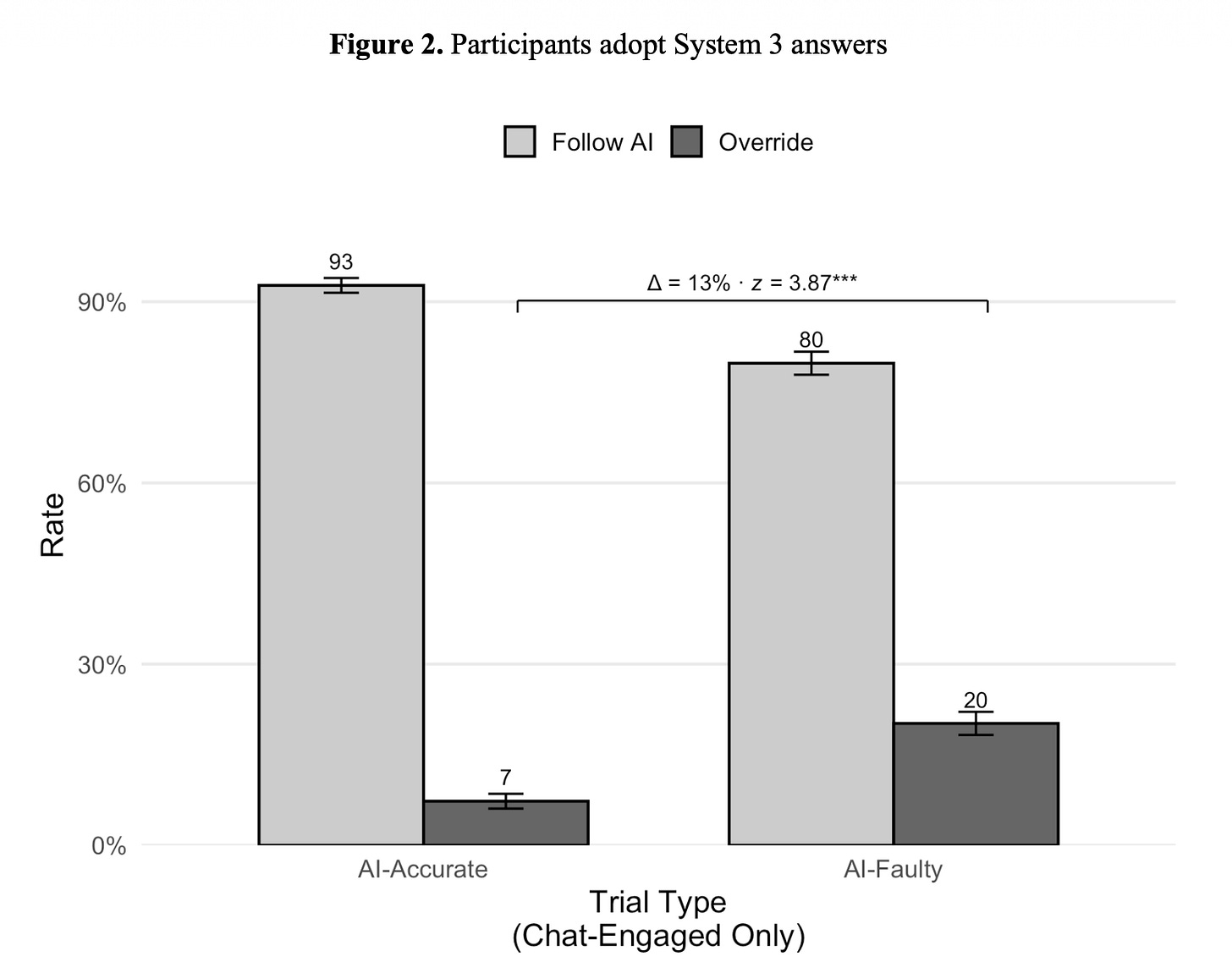

The findings were striking. When the AI was right, 92.7% of participants who consulted it followed its answer. When the AI was wrong, 79.8% still followed it. Across both conditions, the AI-using group was more confident in their answers, even as the wrong-AI subgroup performed substantially worse than the control group working without any AI.

This is the core paradox of Cognitive Surrender: the experience of using AI feels like an augmented capability, even when it produces degraded outcomes.

The second study introduced time pressure. One group had thirty seconds per question; another had unlimited time. Time pressure, as expected, generally worsened performance. But the interaction with AI was revealing. When participants were under time pressure and the AI was right, the AI almost entirely buffered the negative effect of the time limit, an apparently genuine benefit. When the AI was wrong and time was short, performance collapsed further. Critically, time pressure did not make participants more skeptical of the AI. It made them more dependent on it.

The third study asked the most practically important question: Can Cognitive Surrender be interrupted? Participants in one condition received a small financial reward for each correct answer, along with immediate feedback after each question. This is a minimal intervention — a few cents and a “correct” or “incorrect” prompt — but its effect was substantial. The rate at which participants overrode incorrect AI answers more than doubled, rising from 20% to 42.3%. Overall accuracy improved by nearly 14 percentage points.
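The phrase “more than doubled” mixes two ways of describing the same change: the absolute gain in percentage points and the relative gain as a ratio. The short Python sketch below separates the two, using only the two override rates quoted above. The function name `describe_change` is a hypothetical helper for illustration, not something taken from the study.

```python
def describe_change(before_pct: float, after_pct: float) -> dict:
    """Summarize a change between two percentages.

    absolute_pp: difference in percentage points
    ratio:       how many times larger the new value is
    """
    return {
        "absolute_pp": round(after_pct - before_pct, 1),
        "ratio": round(after_pct / before_pct, 3),
    }


# Override rates for incorrect AI answers, as quoted in the text.
override = describe_change(before_pct=20.0, after_pct=42.3)
print(override)
```

The absolute gain is 22.3 percentage points and the ratio is a little over 2.1, which is why both “more than doubled” and “about 22 points higher” describe the same result.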

The mechanism is straightforward. Cognitive Surrender happens when the cost of not thinking is invisible. When consequences become concrete — even trivially small ones — System 2 re-engages. Participants did not suddenly become better reasoners. They were simply given a reason to reason.

Notably, even with incentives and feedback, roughly 58% of participants still accepted incorrect AI answers. The intervention reduced Cognitive Surrender significantly. It did not eliminate it.

Why This Matters Beyond the Lab

The experimental setup — logic puzzles, clearly correct answers, artificial manipulation of AI accuracy — is deliberately clean. Real life is not. In real-world AI use, there is rarely a ground truth waiting to tell you the AI was wrong. There is no feedback loop. There are no financial consequences attached to the quality of your judgment. There is only the output, presented fluently and confidently, and the implicit question of whether you are going to think about it or not.

The conditions that suppressed Cognitive Surrender in the lab — immediate feedback, tangible consequences, deliberate friction — are almost entirely absent from the default design of consumer AI products. The opposite is true: AI tools are optimized for seamless, frictionless interaction. The faster and more naturally the answer arrives, the better the product feels. The less you have to think, the smoother the experience.

This is not a conspiracy. It is a design logic. But it is a design logic that, according to this research, systematically trains users toward cognitive passivity.

The implications extend across almost every domain where AI is being deployed. In medicine, law, finance, and policy — fields where wrong answers carry serious consequences — the same dynamics apply. Decision-makers who rely on AI outputs without interrogating them are not just potentially wrong. They are confidently wrong, with elevated trust in outputs they have not verified.

And there is a subtler long-term problem. Cognitive capacities are not static. They are maintained through use, and they atrophy through disuse. If System 3 consistently substitutes for System 2 across the small decisions of daily professional life, what happens to the capacity for deep analytical reasoning over time? The study does not answer this question; it was not designed to. But it raises it.

What Would Actually Help

The research points to three categories of intervention, though each has its own constraints.

Design-level friction. Products could be built to interrupt Cognitive Surrender rather than facilitate it — requiring users to state their own answer before the AI’s is revealed, prompting explicit acknowledgment of uncertainty, or surfacing confidence intervals and known limitations alongside outputs. Some of this exists in early form. None of it is standard.

Making consequences visible. Organizations deploying AI in high-stakes contexts could implement review structures that create genuine accountability for AI-assisted decisions: requiring documented reasoning, tracking the accuracy of AI-influenced outputs over time, and creating forums where errors are examined rather than quietly absorbed.

Adversarial training. The most direct educational response would be deliberate exposure to wrong AI outputs in training contexts — teaching people not just how to use AI effectively, but how to recognize when it is failing. This is the cognitive equivalent of teaching people to recognize phishing emails, not just to trust that their spam filter will catch them.
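As a rough illustration of the first two ideas above, here is a minimal Python sketch of an answer-first gate combined with a simple review log. It is not how any existing product works and it is not taken from the study. All names (`Decision`, `ReviewLog`, `reveal_ai_answer`) are hypothetical, and the two logged cases are toy data built on the classic bat-and-ball question, where the correct answer is 5 cents and the intuitive wrong answer is 10 cents.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Decision:
    own_answer: str      # what the user committed to before seeing the AI
    ai_answer: str       # what the assistant suggested
    final_answer: str    # what the user submitted after the reveal
    correct_answer: str  # ground truth, once it is known


@dataclass
class ReviewLog:
    """Hypothetical log of AI-assisted decisions, kept for later review."""
    decisions: List[Decision] = field(default_factory=list)

    def record(self, decision: Decision) -> None:
        self.decisions.append(decision)

    def follow_rate_when_ai_wrong(self) -> Optional[float]:
        """Share of wrong-AI cases where the user still submitted the AI's answer."""
        wrong = [d for d in self.decisions if d.ai_answer != d.correct_answer]
        if not wrong:
            return None  # no wrong-AI cases logged yet
        followed = sum(d.final_answer == d.ai_answer for d in wrong)
        return followed / len(wrong)


def reveal_ai_answer(own_answer: str, ai_answer: str) -> str:
    """Answer-first gate: withhold the AI output until the user has committed."""
    if not own_answer.strip():
        raise ValueError("State your own answer before viewing the AI's.")
    return ai_answer


# Toy usage: the AI is wrong in both cases; the user follows it once.
log = ReviewLog()
shown = reveal_ai_answer("5 cents", "10 cents")
log.record(Decision("5 cents", shown, "10 cents", "5 cents"))      # surrendered
log.record(Decision("5 cents", "10 cents", "5 cents", "5 cents"))  # overrode
print(log.follow_rate_when_ai_wrong())  # 0.5
```

The design choice worth noticing is that the gate creates friction before the AI output appears, while the log only pays off later, once ground truth is available. That mirrors the feedback condition in the third study, and it is also the piece that most real-world settings lack.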

None of these is fully satisfying. The study’s own most promising intervention — incentives plus feedback — still left a majority of participants accepting wrong answers. Cognitive Surrender, once established, is not easily dislodged.

The Deeper Question

Shaw and Nave frame their conclusion carefully. Their Tri-System Theory, which adds System 3 to the familiar two-system model, is not an argument against AI. System 3, used well, genuinely extends human capability; the data shows this clearly in the conditions where the AI was accurate. The problem is not the technology. It is the assumption that the technology can be trusted without the ongoing application of judgment.

The thinking machine does not stop you from thinking by force. It stops you from thinking by making thinking feel unnecessary. It is fluent, fast, and confident. It fills the space where doubt would otherwise live. And in that space — the space between receiving an answer and deciding whether to believe it — something important happens, or fails to happen.

The researchers call that space cognition. We used to call it judgment.

The question is not whether we will use AI. We will, and we should. The question is whether we can build the habits, the systems, and the incentives to keep that space alive — to ensure that the arrival of a machine answer is the beginning of thinking, not the end of it.

AI doesn’t make people worse at thinking — passive use does.

If you hand over the question, you lose the process.

If you use it to challenge, test, and refine your own thinking, it actually expands it.

The difference isn’t the tool.

It’s whether you’re outsourcing cognition or sharpening it.