The Decomposition Gap

Why computational thinking — not prompting — is the leverage skill of the AI era.

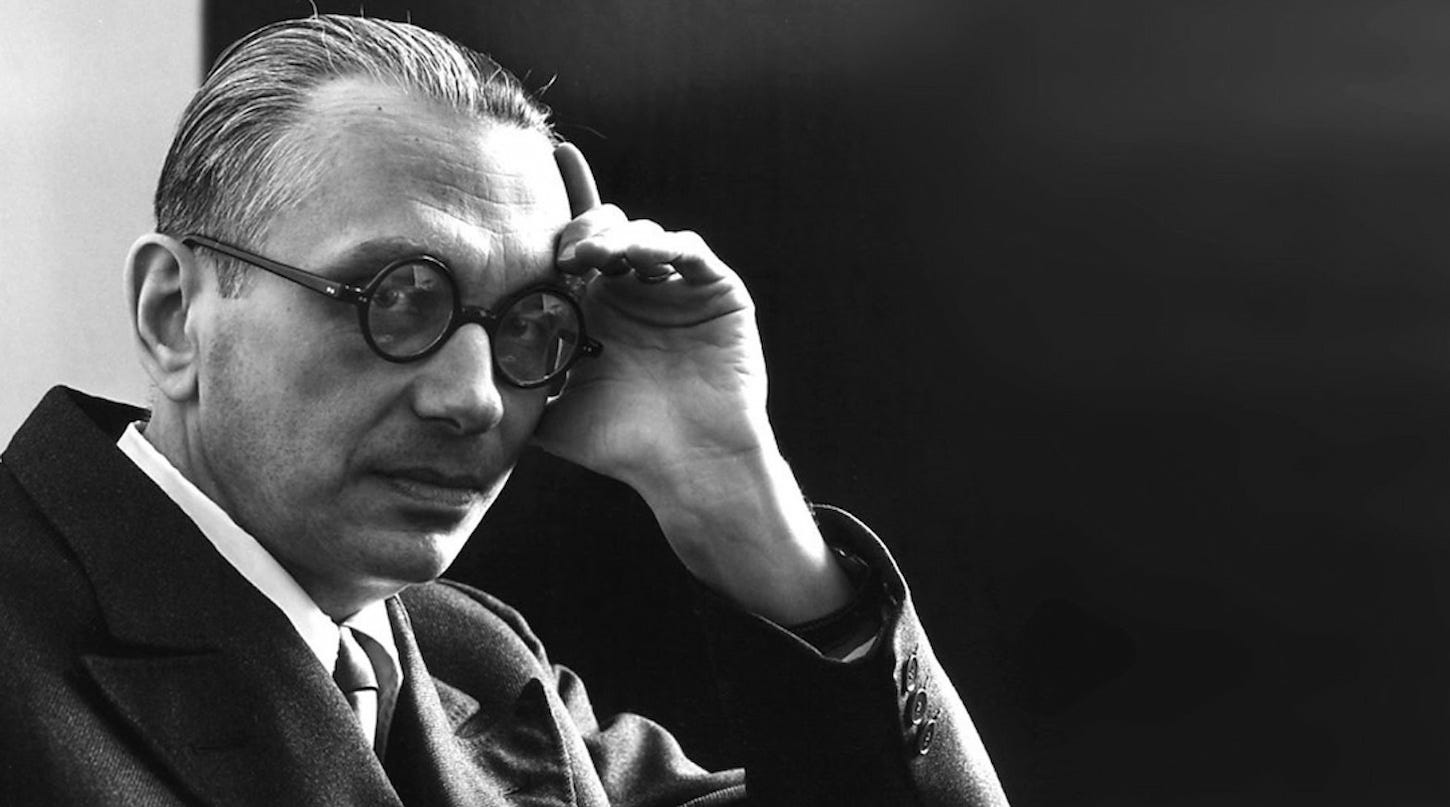

In March 2006, fifteen months before the iPhone shipped and sixteen years before ChatGPT, Jeannette Wing published a three-page essay in Communications of the ACM that has aged better than almost anything else written about computers in that decade. Computational thinking, she wrote, “involves solving problems, designing systems, and understanding human behavior, by drawing on the concepts fundamental to computer science.” It was, she insisted, “a fundamental skill for everyone, not just for computer scientists. To reading, writing, and arithmetic, we should add computational thinking to every child’s analytical ability.”

Wing was careful about what she meant and, more importantly, what she didn’t mean. Computational thinking, she wrote, “is conceptualizing, not programming.” It is “a way that humans, not computers, think.” It is concerned with “ideas, not artifacts.” In 2006, the distinction was pedagogical. In 2026, after three years in which large language models have devoured the artifact layer of our work, it has become the entire game.

This series argues something specific. It is not that AI cannot think, or that human cognition is sacred, or that the models will plateau. The argument is narrower and, I think, more useful: computational thinking is the cognitive architecture that distinguishes people who get an order of magnitude more out of generative AI from people who get less than they would working alone. The skill is not prompting. The skill is the one Wing described.

The evidence: feeling faster, actually slower

In July 2025, researchers at METR (the Model Evaluation & Threat Research organization) published the results of a randomized controlled trial that should have received more attention than it did. They recruited sixteen experienced open-source developers who knew their repositories deeply (the projects averaged 23,000 GitHub stars) and assigned them 246 real tasks drawn from those projects. For each task, they randomly permitted or forbade the use of early-2025 AI tools, primarily Cursor Pro with Claude 3.5 and 3.7 Sonnet.

Before the study, the developers forecast that AI would reduce their completion time by 24%. After the study, they believed it had reduced it by 20%. The actual measured result: when AI was allowed, the same developers took 19% longer to complete similar tasks.
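The arithmetic behind that gap is worth making explicit. The following is a minimal sketch that uses only the three percentages reported above; the 100-minute baseline and the helper name `time_with_change` are assumptions chosen for illustration, not figures or terms from the study.

```python
# Illustrative arithmetic only. The 100-minute baseline is an assumption;
# the three percentages are the ones quoted in the text.
BASELINE_MINUTES = 100.0

def time_with_change(baseline: float, pct_change: float) -> float:
    """Return task time after a percentage change (negative means faster)."""
    return baseline * (1 + pct_change / 100)

forecast = time_with_change(BASELINE_MINUTES, -24)   # expected before the study
perceived = time_with_change(BASELINE_MINUTES, -20)  # believed after the study
measured = time_with_change(BASELINE_MINUTES, +19)   # observed when AI was allowed

# Distance between belief and measurement, in percentage points
# of the no-AI baseline.
perception_gap_points = (measured - perceived) / BASELINE_MINUTES * 100

print(f"forecast:  {forecast:.0f} min")
print(f"perceived: {perceived:.0f} min")
print(f"measured:  {measured:.0f} min")
print(f"perception gap: {perception_gap_points:.0f} percentage points")
```

On these assumptions, a task that takes 100 minutes unaided was believed to take about 80 with AI and measured at about 119, a gap of roughly 39 percentage points between what the developers felt and what the clock recorded.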

The gap between perception and reality is the interesting part. These were not hobbyists. They were experts working in codebases they knew deeply, using frontier tools, and they were wrong about the direction of the effect. They felt faster because the tools produced fluent, plausible output quickly. Shipping that output (actually getting it into a repository they were willing to maintain) took longer than doing the work directly.

The METR finding does not stand alone, but neither does it generalize uncritically. Brynjolfsson, Li, and Raymond’s landmark study of 5,172 customer-support agents at a Fortune 500 software company found a very different pattern: access to a generative AI assistant increased issues resolved per hour by roughly 15% on average, with about 34% gains for novice and low-skilled workers and essentially no measurable gain for the most experienced.

Put the two studies side by side, and the picture sharpens. Generative AI does not uniformly raise or lower productivity. It raises the floor for people below a certain skill threshold, and it can lower the ceiling for people above it, especially when the work requires sustained reasoning about a system the model cannot see. What separates the outcomes, I will argue, is not access to tools. It is whether the human brings a deliberate structural approach to the problem before invoking the model.

I will call this measurable delta the Decomposition Gap: the performance difference between users who structure a problem before prompting and those who prompt-and-pray.

What computational thinking actually is

Wing’s essay, and the curricula later built on it, are usually distilled into four moves that, twenty years on, still cover most of the territory:

Decomposition — breaking a large, ill-defined problem into smaller, tractable sub-problems.

Abstraction — choosing a representation that preserves what matters and discards what doesn’t. Wing called this “choosing an appropriate representation for a problem or modeling the relevant aspects of a problem to make it tractable.”

Pattern recognition — seeing that a new problem is isomorphic to one already solved, so that an existing solution can be reused or adapted.

Algorithmic design — specifying the flow of steps, decisions, and data that takes a problem from input to resolution.

To these four, I want to add a fifth, which the generative-AI era has made unavoidable:

Verification — evaluating, from outside the generating system, whether an output is correct, complete, and safe.

Verification was always implicit in the other four; you cannot decompose well without some way of checking that the pieces compose back together. But when the generator was a human — you, or a colleague, or a well-understood library — verification happened mostly tacitly. When the generator is a model that produces confident, fluent, and often subtly wrong output at a rate no human reviewer can naturally match, verification becomes its own discipline. It is the move this series will argue for most strongly, and it is where Part 2 will live.
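One way to picture decomposition and verification working together is as a pipeline in which every sub-problem carries its own check, written independently of whatever produces the answer. The sketch below is a hypothetical illustration, not a method from Wing's essay or from any library: `SubTask`, `run_pipeline`, and the `fake_generator` stand-in for a model call are all invented names.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class SubTask:
    name: str
    prompt: str
    verify: Callable[[str], bool]  # check defined outside the generator

def run_pipeline(subtasks, generate):
    """Send each sub-task to `generate`; accept output only if its check passes."""
    accepted, rejected = {}, []
    for task in subtasks:
        output = generate(task.prompt)
        if task.verify(output):
            accepted[task.name] = output
        else:
            rejected.append(task.name)  # routed back to a human
    return accepted, rejected

def fake_generator(prompt: str) -> str:
    """Hypothetical stand-in for a model call; returns canned answers."""
    canned = {
        "sum of 2 and 3": "5",
        "sort 3,1,2 ascending": "1,3,2",  # deliberately wrong
    }
    return canned.get(prompt, "")

subtasks = [
    SubTask("add", "sum of 2 and 3", lambda out: out.strip() == str(2 + 3)),
    SubTask("sort", "sort 3,1,2 ascending",
            lambda out: out.split(",") == sorted(["3", "1", "2"])),
]

accepted, rejected = run_pipeline(subtasks, fake_generator)
print("accepted:", accepted)
print("needs human review:", rejected)
```

The design choice that matters is that each `verify` function is written from the problem statement, not from the generator's output, so a fluent but wrong answer to the sorting sub-task is caught rather than passed along.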

A brief Gödelian aside

It is worth pausing once to consider why verification is a separate act rather than just more generation.

In 1931, Kurt Gödel proved that any consistent, effectively axiomatized formal system rich enough to express ordinary arithmetic contains true statements that cannot be proved from within the system itself. A system’s limits cannot, in general, be seen from inside it; establishing them requires stepping outside.

Large language models are not formal systems in Gödel’s sense, and I am not claiming a mathematical identity. What I am claiming is a structural resonance. A model trained to predict plausible text continuations cannot, within the same predictive process, reliably certify that its output is true or complete, or that it is still operating within the distribution on which it was trained. The generator’s confidence is produced by the same machinery as the generation. Checking it requires a vantage point that the generator lacks.

That vantage point is what the human brings. The five moves of computational thinking collectively constitute the discipline of thinking from outside the system that produces the work.

The verification ceiling in practice

This is not abstract. Anthropic — a company with more incentive than most to make models look self-sufficient — has published unusually candid engineering notes on what happens when AI agents try to evaluate their own output.

In their field guide, Demystifying evals for AI agents, the team writes that agent evaluations are “complex because agents use tools across many turns, modifying state and adapting as they go, which means mistakes can propagate and compound.” They describe an episode during development in which a model appeared to score only 42% on a benchmark until an engineer, reading transcripts by hand, discovered that the graders, not the model, were wrong. After the scaffolding was fixed, the measured score jumped to 95%. The model had not improved. The human had distinguished “the agent got it wrong” from “our evaluation got it wrong” — a distinction that requires computational thinking about the evaluation system itself, not just about the task.
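The failure mode is easy to reproduce in miniature. The sketch below is a hypothetical illustration, not Anthropic's graders or benchmark: it assumes a numeric task and compares a rigid exact-match grader with one that allows a small relative tolerance. The function names and every sample value are invented.

```python
import math

def strict_grader(answer: str, reference: str) -> bool:
    """Exact string match: any rounding or formatting difference counts as wrong."""
    return answer.strip() == reference.strip()

def tolerant_grader(answer: str, reference: str, rel_tol: float = 1e-3) -> bool:
    """Numeric comparison within a relative tolerance; non-numeric answers fail."""
    try:
        return math.isclose(float(answer), float(reference), rel_tol=rel_tol)
    except ValueError:
        return False

# Invented (answer, reference) pairs. The first three answers agree with the
# reference up to rounding or formatting; the last is genuinely wrong.
cases = [("3.14", "3.14159"), ("0.50", "0.5"), ("1e3", "1000"), ("42", "57")]

strict_score = sum(strict_grader(a, r) for a, r in cases) / len(cases)
tolerant_score = sum(tolerant_grader(a, r) for a, r in cases) / len(cases)

print(f"strict grader:   {strict_score:.0%}")
print(f"tolerant grader: {tolerant_score:.0%}")
```

The answers are identical in both runs; only the grader changes. Telling those two situations apart is a judgment about the evaluation system, and nothing in the score itself reveals which one you are in.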

A companion Anthropic post on harness design for long-running agents is equally direct about failure modes: models “tend to lose coherence on lengthy tasks as the context window fills,” and robust operation depends on human-designed scaffolding for decomposition, checkpointing, and recovery. The agent does the generating. The human does the structuring that makes generating useful.
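As a rough illustration of what such scaffolding involves, the sketch below runs a list of steps, writes a checkpoint after each one, and resumes from the last checkpoint after a failure. It is a minimal sketch under stated assumptions, not the design described in the Anthropic post: `run_with_checkpoints`, the JSON checkpoint format, and the toy steps are all hypothetical.

```python
import json
import os
import tempfile

def run_with_checkpoints(steps, checkpoint_path):
    """Run named steps in order, skipping any already recorded in the checkpoint."""
    done = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            done = json.load(f)
    for name, step in steps:
        if name in done:
            continue  # recovered from an earlier run
        done[name] = step(done)  # each step sees prior results only
        with open(checkpoint_path, "w") as f:
            json.dump(done, f)
    return done

attempts = {"count": 0}

def flaky_summarize(state):
    """Toy step that fails on its first call, simulating a mid-task breakdown."""
    attempts["count"] += 1
    if attempts["count"] == 1:
        raise RuntimeError("simulated failure")
    return f"summary of {state['load']}"

steps = [("load", lambda state: "raw data"), ("summarize", flaky_summarize)]
path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")

try:
    run_with_checkpoints(steps, path)
except RuntimeError:
    pass  # a human or supervisor decides whether to retry

result = run_with_checkpoints(steps, path)  # resumes after "load"
print(result)
```

Nothing here depends on the step being a model call. The step boundaries, the saved state, and the decision to retry are all choices made outside the generator.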

The stakes of this asymmetry extend beyond benchmarks. In August 2025, Anthropic disclosed that agentic AI had been weaponized in sophisticated cyberattacks — not as an advisor, but as an operational participant. Whatever one thinks of the defensive posture, the episode underlines a point the productivity numbers only hint at: as models become more capable of taking action, the human capacity to specify, bound, and verify what those actions should be becomes more load-bearing, not less.

The contrarian turn: prompting described a surface, not a skill

For about eighteen months between 2023 and 2024, “prompt engineering” was marketed as the new literacy. Job postings appeared. Short courses proliferated. The framing was seductive because it suggested a bounded, learnable skill that sat neatly atop the existing tools.

The framing described a surface, not a skill. The text you type is an artifact; the cognitive work that produces it, or fails to, is what determines whether the model helps you. The METR developers were using state-of-the-art tooling and were presumably writing well-formed prompts, yet they still took 19% longer. On the reading offered here, the limiting factor was not prompt syntax but whether the problem had been decomposed into pieces a model could be trusted to handle.

Ethan Mollick, writing in Co-Intelligence, has made a related point from a different angle: the people who extract the most value from current AI are not those with the most clever prompts but those who have integrated the model into a deliberate workflow — what he calls centaur and cyborg patterns of collaboration, in which the human retains responsibility for problem framing and final judgment while delegating specific, bounded sub-tasks. The substance of the integration is computational thinking. The prompt is a byproduct.

The durable claim is this: tools will keep changing. Models will improve, and the improvements will be real. The harnesses, editors, and agent frameworks we use in 2028 will look different from those we use now. The cognitive architecture that makes any of them useful — the discipline of decomposing, abstracting, pattern-matching, designing, and verifying — will not.

Where the series goes from here

This piece has argued, from the evidence now available, that computational thinking is the leverage skill of the AI era, and that the Decomposition Gap is the measurable shape the skill takes in practice. Two further arguments follow.

Part 2, Above the Verification Ceiling, will treat the five moves as a working field manual. Each move gets a definition, a worked example using current-generation models, its characteristic failure mode, and a practice pattern you can apply to your own recent AI-assisted work. The governing motif is the Verification Ceiling: the upper bound on what a generator can know about its own output, and the disciplines by which a human operates above it.

Part 3, Capability Debt, will scale the argument to organizations. If computational thinking is the skill that compounds, then teams that outsource it to models before they have mastered it are accruing a liability — a capability debt that becomes visible only when something the model produces needs to be evaluated, defended, or repaired. The piece will propose concrete changes to how technical hiring, technical education, and team design should respond.

For now, the point to sit with is Wing’s, written before any of this was on the horizon: computational thinking is “a way that humans, not computers, think.” The systems we have built are extraordinary. They cannot see their own limits. The skill of the next decade is learning to think, deliberately, from outside them.