Radiance and Blindness

The Halo Effect in the 21st Century

Scroll through Instagram for thirty seconds. You will encounter faces that are symmetrical, luminous, impossibly smooth — faces that have been quietly sculpted by filters, lighting algorithms, and AI-powered beauty tools. Now ask yourself honestly: did you trust those faces a little more? Did they seem, somehow, more competent? More worth listening to?

If you answered yes, you are not shallow. You are human. And you are caught in one of the oldest, most pervasive, and most consequential cognitive traps ever identified by science: the Halo Effect.

A century after its discovery, the Halo Effect is not just alive — it is thriving, mutating, and scaling. It has colonized our hiring pipelines, our social media feeds, our medical consultations, and now, perhaps most dangerously of all, our artificial intelligence systems. Understanding it is no longer a matter of personal self-improvement. It is a matter of social justice, digital literacy, and democratic accountability.

Part I: The Ghost of Edward Thorndike

The year was 1920. American psychologist Edward Lee Thorndike published a deceptively modest paper titled “A Constant Error in Psychological Ratings.” In it, he described something he had observed while studying how military officers evaluated their subordinates: when a soldier was rated highly on one trait — say, physical appearance — officers systematically rated him higher on all other traits, including intelligence, leadership, and reliability. The reverse was equally true. One negative impression cast a shadow over every subsequent judgment.

Thorndike called this the Halo Effect: the tendency of a single positive (or negative) impression to radiate outward, contaminating our evaluation of entirely unrelated characteristics.

The mechanism is elegant in its simplicity. The human brain is a prediction machine, constantly under evolutionary pressure to make fast, efficient decisions with incomplete information. Assessing a stranger across every possible dimension is metabolically expensive and cognitively slow. So the brain cheats. It takes one strong signal — beauty, a firm handshake, an expensive suit, a confident voice — and extrapolates from it. This person seems good in one way. Therefore, they are probably good in many ways.

For most of human evolutionary history, this shortcut was useful. In small communities where reputation was earned slowly and information was scarce, a person’s appearance and bearing genuinely correlated with their social standing and reliability. The halo was a rough but functional heuristic.

In the 21st century, it is a catastrophe.

Part II: The Science Is Still Coming In

One might assume that a phenomenon identified over a century ago would be well-understood, largely mapped, and perhaps even partially corrected. One would be wrong.

Research into the Halo Effect is accelerating, not decelerating — because modern life keeps generating new contexts in which it operates, and new tools with which to measure it.

A January 2026 study published in Frontiers in Psychology examined what researchers called the “Happiness Halo Effect” in workplace settings. The findings were striking: employees who displayed consistent markers of happiness — smiling frequently, expressing enthusiasm, using positive language — were systematically overestimated by colleagues and managers in their actual competence, productivity, and problem-solving ability. This was true even when objective performance metrics told a different story. A happy employee who missed deadlines was evaluated more generously than a quieter, more reserved colleague who consistently delivered.

The implications for performance reviews, promotions, and workplace culture are profound. We are not rewarding merit. We are rewarding radiance.

Meanwhile, a landmark 2024 study by the Royal Society revisited the classic finding that “what is beautiful is good,” a phrase coined in the 1970s to describe how physical attractiveness bleeds into judgments of moral character, intelligence, and social worth. The new research introduced a critical 21st-century twist: participants were explicitly informed that the people they were evaluating had used Instagram beauty filters and AI photo-editing tools. They were told, in plain language, that the images were artificially enhanced.

It did not matter.

Participants still rated the filtered faces as more trustworthy, more competent, and more likable. The intellectual knowledge that the image was manipulated failed to override the emotional and cognitive response to perceived attractiveness. Once activated, the halo is remarkably resistant to correction.

This finding is more than a curiosity. It is a warning.

Part III: The Social Wound — How the Halo Effect Reproduces Inequality

The Halo Effect does not operate in a vacuum. It operates in societies that already have hierarchies of race, gender, class, body size, age, and disability. And what it does, with quiet efficiency, is amplify those hierarchies.

Consider the labor market. Decades of research have documented that taller candidates are more likely to be hired and promoted. Attractive candidates command higher starting salaries — an advantage economists have dubbed the beauty premium, estimated at 10-15% over a lifetime of earnings. Well-dressed candidates are assumed to be more competent before they speak a single word.

None of this is fair. None of this is rational. All of this is real.

The data on race is particularly damning. Studies using identical CVs sent to employers — with names randomly assigned to signal either a white or a Black applicant — have consistently shown that white-sounding names receive significantly more callbacks. But the Halo Effect adds another layer. Once a candidate from a marginalized group does make it to an interview, they face a compounded challenge: any single perceived weakness — a nervous pause, an unfamiliar accent, a less-than-perfect handshake — can trigger the Horn Effect, the Halo Effect’s dark twin, in which one negative impression corrupts all subsequent evaluation.

The Halo Effect, in this context, is not merely a cognitive bias. It is a mechanism of structural discrimination. It does not create inequality, but it locks it in place, giving it the false patina of meritocracy.

The same dynamic plays out in medicine. Studies have shown that overweight patients are more likely to have their symptoms dismissed or attributed to their weight, regardless of the actual diagnosis. Attractive patients receive more thorough consultations. Well-spoken patients — those who use medical vocabulary and project confidence — are taken more seriously. The halo of appearance and articulation determines, in part, the quality of care you receive. In some cases, it determines whether you live or die.

In politics, the effect is on full display every election cycle. Numerous studies have shown that physically attractive political candidates receive more votes, are perceived as more competent and trustworthy, and command greater media attention — even when voters cannot identify a single policy position associated with them. In an age of high-definition television and curated social media presences, the political halo has never been brighter, or more blinding.

Part IV: The Digital Halo — When Algorithms Inherit Our Biases

Here is where the story takes its most urgent turn.

For decades, the Halo Effect was a human problem, constrained by human scale. A biased manager could affect dozens of careers. A biased admissions officer could affect hundreds of applications. These were serious harms, but they were bounded.

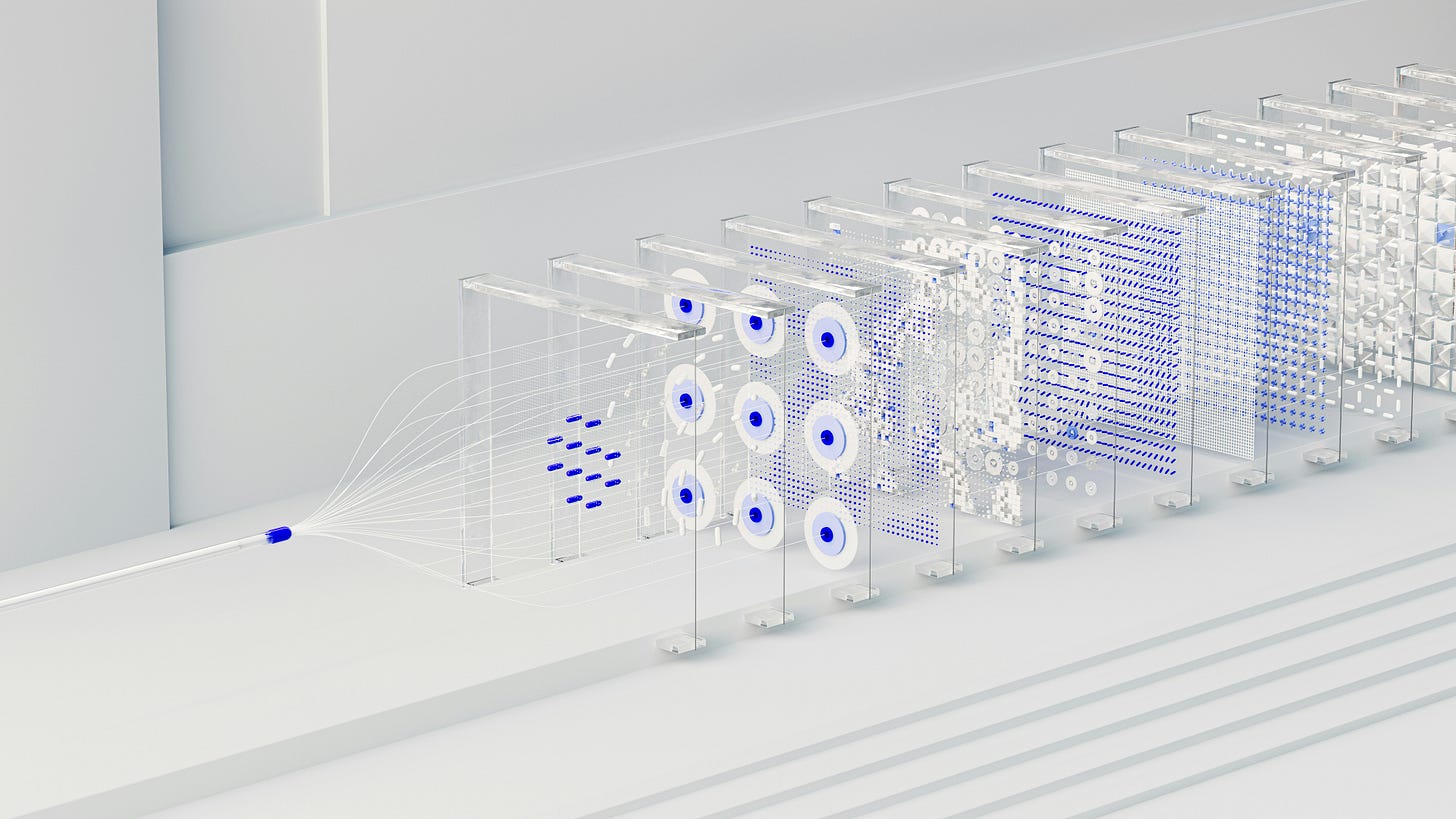

Then we built machines to make decisions for us. And we fed those machines human data.

Machine learning systems learn from patterns in historical data. If that historical data reflects centuries of human bias — and it does — then the patterns the machines learn will reflect that bias too. This is not a theoretical risk. It is a documented reality.

Amazon famously scrapped an internal AI recruiting tool in 2018 after discovering that it systematically downgraded CVs that included the word “women’s” — as in women’s chess club or women’s college — and favored male candidates. Why? Because it had been trained on a decade of Amazon’s own hiring decisions, which, like most tech company hiring decisions, skewed heavily male. The system learned the halo: male-pattern CVs signal competence. Female-pattern CVs signal less competence. It learned this without ever being told. It inferred it from human choices.

This is what researchers call bias laundering: the transformation of human prejudice into algorithmic output, which then acquires the false authority of mathematical objectivity. When a human manager rejects a candidate based on gut feeling, they might be challenged. When an algorithm rejects a candidate based on a score, the decision feels scientific, neutral, and final.
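To make the mechanism concrete, here is a deliberately tiny sketch of how a scoring system can absorb a bias it was never told about. It is not Amazon's system, whose internals are not described here. The hiring history, the keywords, and the scoring rule are all invented for illustration, and the "model" is nothing more than a per-keyword hire rate.

```python
from collections import defaultdict

# Hypothetical historical decisions: (keywords on the CV, was the candidate hired?).
# The data is invented; it simply encodes a past preference against "women's".
HISTORY = [
    ({"python", "chess club"}, True),
    ({"python", "rugby"}, True),
    ({"java", "chess club"}, True),
    ({"python", "women's chess club"}, False),
    ({"java", "women's college"}, False),
    ({"python", "women's college"}, True),
]


def learn_keyword_scores(history):
    """Score each keyword by the hire rate of past CVs that contained it."""
    seen = defaultdict(int)
    hired = defaultdict(int)
    for keywords, was_hired in history:
        for keyword in keywords:
            seen[keyword] += 1
            hired[keyword] += int(was_hired)
    return {keyword: hired[keyword] / seen[keyword] for keyword in seen}


def score_cv(keywords, scores, default=0.5):
    """Average the learned keyword scores; unseen keywords get a neutral default."""
    values = [scores.get(keyword, default) for keyword in keywords]
    return sum(values) / len(values)


scores = learn_keyword_scores(HISTORY)

# Two CVs that differ only in one phrase receive different scores.
score_a = score_cv({"python", "chess club"}, scores)
score_b = score_cv({"python", "women's chess club"}, scores)
print(f"chess club: {score_a:.3f}  women's chess club: {score_b:.3f}")
```

Nothing in the code mentions gender. The gap between the two scores comes entirely from the pattern in the historical decisions, which is the point: the number looks objective, but it is a summary of past human choices.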

The Large Language Model (LLM) era has introduced an entirely new dimension of the Halo Effect. When users interact with AI systems like the ones powering today’s most advanced chatbots and writing assistants, they bring their own halo biases to the interaction. Research has shown that responses written in fluent, confident, grammatically impeccable prose are rated as more accurate, more insightful, and more trustworthy — even when their content is factually wrong. The eloquence creates a halo. The halo occludes the error.

Conversely, a 2025 German study on consumer perception of AI-generated advertising documented a striking negative Halo Effect: when consumers were informed that an advertisement had been created by AI, their skepticism about the ad’s authenticity transferred immediately to the product itself. The product was judged as lower quality, less trustworthy, and less desirable — not because anything about the product had changed, but because the halo had been replaced by a shadow.

This inverse effect — call it the AI Horn Effect — has significant implications for how companies communicate about their use of AI technologies, and more broadly, for how societies will come to evaluate AI-generated content in every domain, from news to art to scientific papers.

We are, in other words, applying the same ancient cognitive shortcut to machines that we apply to people. We judge the container, not the content. We judge the style, not the substance. We judge the glow.

Part V: Can We Break the Spell?

The question everyone asks after learning about cognitive biases is: “Now that I know about this, can I stop doing it?”

The uncomfortable answer, which the Royal Society study makes viscerally clear, is: mostly no.

Awareness of a bias does not automatically correct for it. This is one of the most replicated and most humbling findings in cognitive psychology. You can know, intellectually and completely, that a job candidate’s height is irrelevant to their programming ability, and still unconsciously favor the taller applicant. The bias operates below the threshold of conscious intention.

This does not mean we are helpless. It means that individual willpower is the wrong tool for a structural problem.

What actually works — at least partially — is redesigning the systems in which decisions are made, so that the moments of bias have less opportunity to operate.

Structured interviewing — in which every candidate is asked exactly the same questions in exactly the same order, evaluated on a predefined rubric, by interviewers who have been briefed on bias — significantly reduces the influence of the Halo Effect compared to unstructured, conversational interviews. It is not perfect. Nothing is. But the data consistently shows it is better.

Blind review processes — in which identifying information (names, photographs, institutional affiliations) is removed before evaluation — have been shown to increase diversity in hiring, publication, and artistic grant allocation. Orchestras that introduced blind auditions, with candidates playing behind a screen, saw a marked increase in the hiring of female musicians.
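In software terms, a blind review step can be as simple as stripping identifying fields before a reviewer ever sees the file. The sketch below assumes applications arrive as dictionaries. The field names (`name`, `photo_url`, `institution`) and the `candidate_id` label are hypothetical, chosen only to mirror the examples in the paragraph above.

```python
# Field names are assumptions for this sketch, not a real application schema.
IDENTIFYING_FIELDS = frozenset({"name", "photo_url", "institution"})


def redact_application(application, candidate_id, identifying_fields=IDENTIFYING_FIELDS):
    """Return a copy without identifying fields, labeled by an opaque ID."""
    blind = {
        key: value
        for key, value in application.items()
        if key not in identifying_fields
    }
    blind["candidate_id"] = candidate_id
    return blind


application = {
    "name": "Jordan Example",
    "photo_url": "https://example.org/photo.jpg",
    "institution": "Example University",
    "work_sample": "Refactored a billing module and cut test time in half.",
    "years_experience": 6,
}

blind_application = redact_application(application, candidate_id="C-001")
print(blind_application)
```

The original record is left untouched, so the identifying details can be reattached after the evaluation is complete. Redaction of this kind only removes the obvious signals; free-text fields can still leak identity, which is one reason blind review reduces the halo rather than eliminating it.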

Algorithmic auditing — the rigorous, ongoing examination of AI systems for discriminatory patterns — is increasingly being mandated by legislation, including the EU’s AI Act, which came into force in stages from 2024 onward. This is a necessary step, though critics rightly point out that auditing is only as good as the metrics used, and that defining fairness in algorithmic systems is itself a deeply contested philosophical and political question.
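One of the simplest audit checks compares how often a system selects candidates from different groups. The sketch below computes per-group selection rates and the ratio of the lowest rate to the highest, a measure related to the "four-fifths" rule of thumb used in US employment guidelines. The group labels and decisions are invented, and this is only one of many competing fairness metrics, which is exactly the contested ground the critics describe.

```python
from collections import Counter


def selection_rates(decisions):
    """Map each group to the share of its candidates that were selected.

    `decisions` is an iterable of (group, was_selected) pairs.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Invented audit sample: 10 candidates per group.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = impact_ratio(rates)
print(rates, f"impact ratio = {ratio:.2f}")
```

In this made-up sample, group_b is selected half as often as group_a. A low ratio does not prove discrimination on its own, and a high one does not rule it out; it is a flag that tells auditors where to look more closely.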

In the domain of social media and digital communication, some platforms are experimenting with removing visible like counts or delaying engagement metrics to reduce the halo of popularity — the tendency of a post with many likes to be judged as more credible and more worthy of further engagement, regardless of its actual content.

These interventions matter. They are worth pursuing. But we should be clear-eyed about their limits.

Part VI: The Deeper Problem

The Halo Effect is not a bug in human cognition. It is a feature: an ancient, deeply embedded one that helped our ancestors navigate a world that no longer exists, and that now operates in a world that has become almost incomprehensibly complex.

We live in a civilization that generates more information in a single day than previous generations encountered in a lifetime. We interact with more strangers in a week than our ancestors met in a year. We are asked to make evaluations — of candidates, of products, of news stories, of scientific claims, of political arguments — at a volume and speed that our brains were simply not designed for.

The halo is our brain’s desperate attempt to cope. And that is precisely what makes it so dangerous. Because we are simultaneously building systems — algorithmic, institutional, political — that operate at scales millions of times larger than any individual human judgment, and we are building them out of human data, human preferences, and human choices. Every one of those choices carries within it the residue of every halo effect that shaped the person who made it.

The result is a world in which our cognitive shortcuts are being industrialized. The biased hiring manager affected a few dozen people per year. The biased hiring algorithm affects millions of applications per day. The politician who won on looks once affected a single constituency. The recommendation algorithm that boosts the visibility of attractive faces affects the information environment for billions.

We are not just making individual mistakes anymore. We are systematizing our mistakes and running them at a planetary scale.

Conclusion: Learning to See in the Glare

In optics, a halo is formed when light passes through ice crystals in the atmosphere and bends, creating a luminous ring around the source. The ring is beautiful. It is also an illusion — a trick of refraction that tells you nothing about the actual nature of the light.

The cognitive halo works the same way. It takes a single signal — beauty, confidence, wealth, eloquence — and bends our perception around it, creating a luminous ring of assumed virtue, assumed competence, assumed trustworthiness. The ring is compelling. It is also, very often, a lie.

A hundred years after Thorndike named it, we have built a civilization that rewards the halo at every level — in our schools, our workplaces, our media, our political systems, and now our machines. We have not overcome the bias. We have embedded it in the infrastructure.

Overcoming it will require more than awareness campaigns and diversity workshops, though those are not nothing. It will require structural redesign: of how we hire, how we evaluate, how we build and audit algorithms, how we regulate platforms, and how we teach critical thinking in a world saturated with carefully curated radiance.

It will also require a kind of intellectual humility that does not come naturally to a species that evolved to trust its instincts. The humility to say: the glow I am seeing is not information. It is noise. Let me look past it.

The light is beautiful. But the light can blind you.